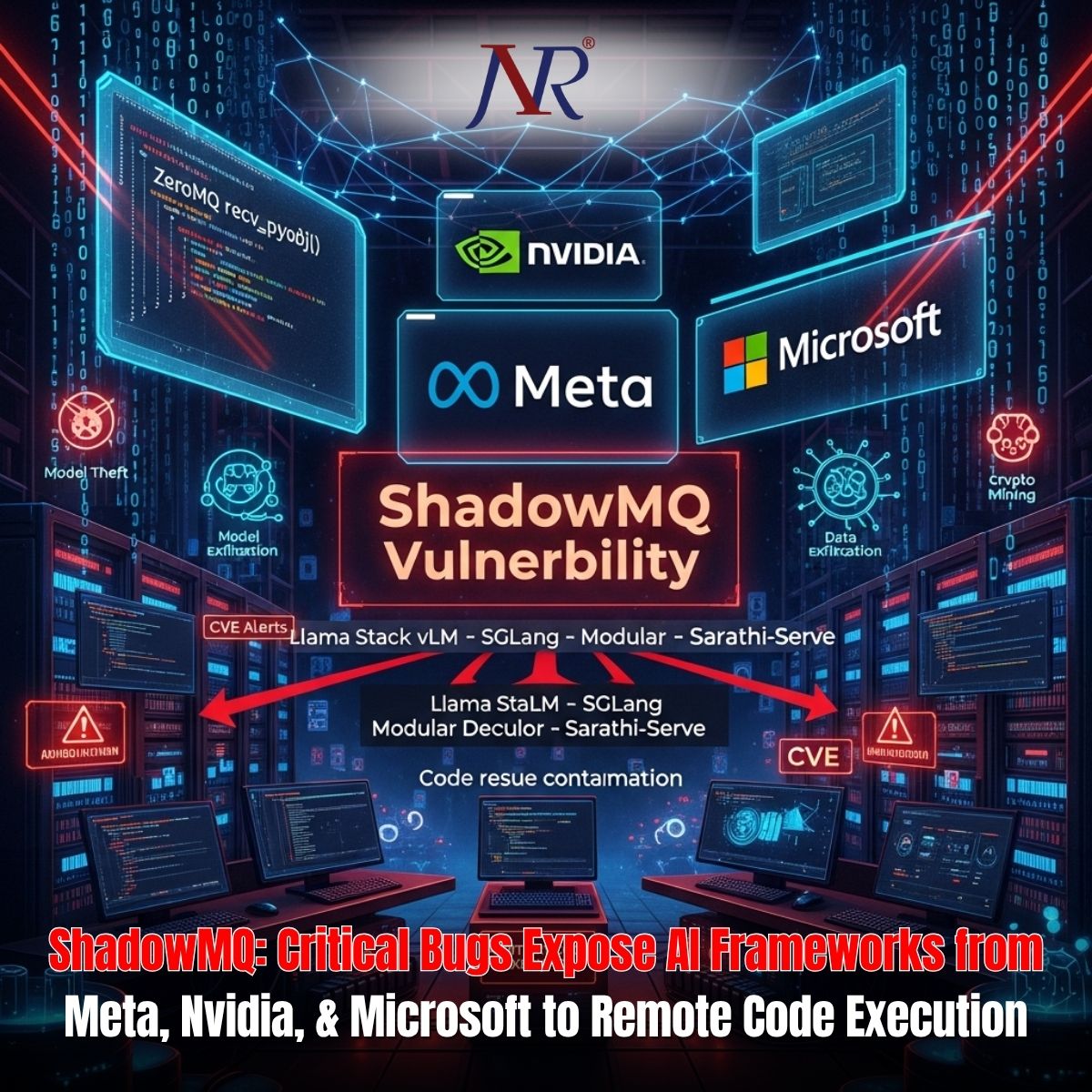

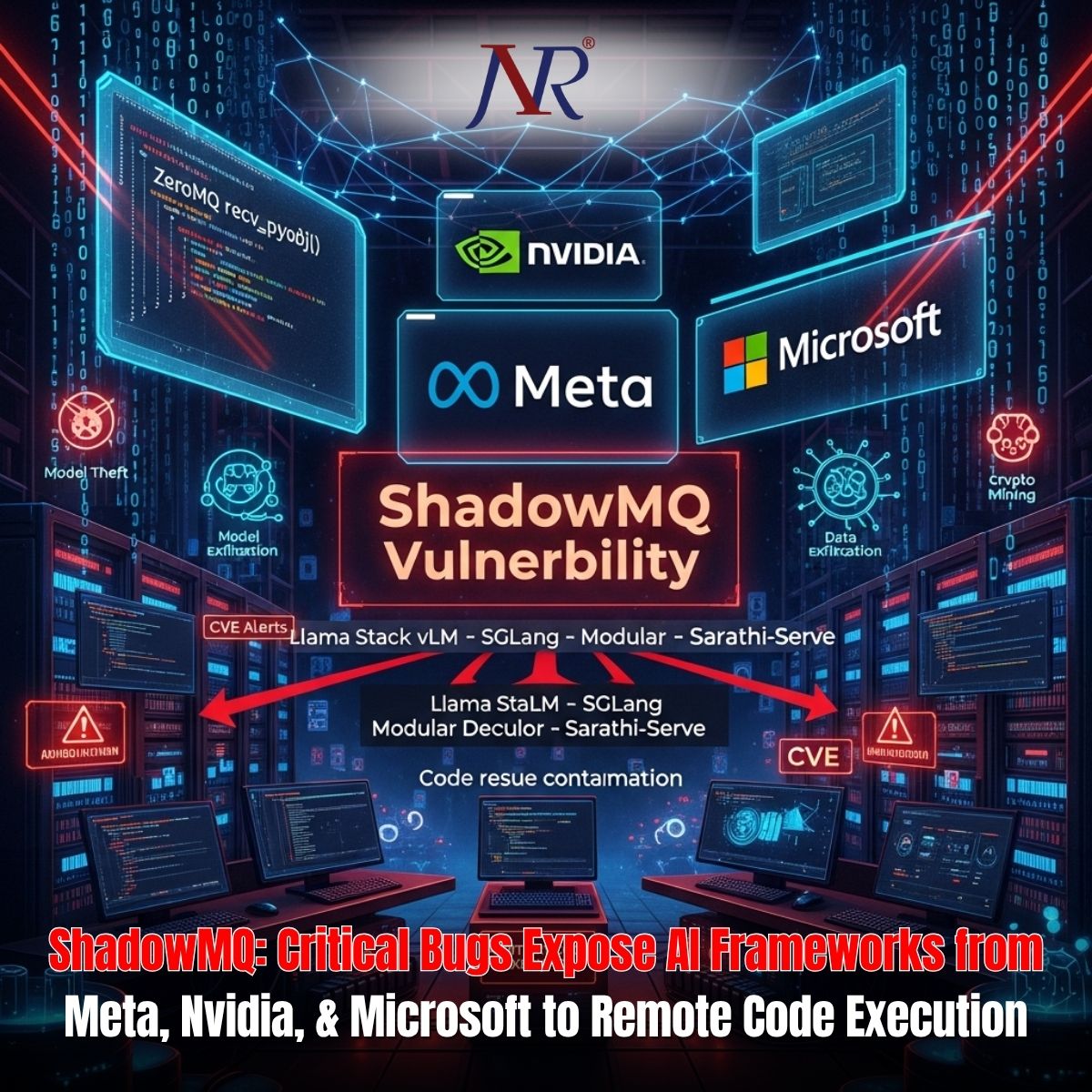

Cybersecurity researchers at Oligo Security have uncovered a systemic vulnerability pattern dubbed "ShadowMQ" that has propagated across multiple enterprise AI inference frameworks from Meta, Nvidia, Microsoft, and open-source projects including vLLM and SGLang. The vulnerabilities stem from unsafe code patterns copied directly between projects, demonstrating how security flaws can rapidly contaminate an entire ecosystem through code reuse without proper security validation.

At the heart of all identified vulnerabilities lies a dangerous architectural pattern: calling the recv_pyobj() method from pyzmq, ZeroMQ's Python binding, on data received over unauthenticated network sockets. recv_pyobj() deserializes every incoming message with Python's pickle module. That is fundamentally unsafe, because pickle can execute arbitrary code during deserialization. The design is acceptable in tightly controlled environments but catastrophic when the socket is exposed to untrusted network sources.
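To see why this matters, note that recv_pyobj() hands the received bytes straight to `pickle.loads`. The sketch below uses only the standard library and a deliberately harmless payload. The `Payload` class is hypothetical and stands in for attacker-crafted bytes: its `__reduce__` method tells pickle to call `eval` on an attacker-chosen expression at load time. A real attacker would substitute something like `os.system`.

```python
import pickle


class Payload:
    """Hypothetical attacker-controlled object (illustration only)."""

    def __reduce__(self):
        # Instead of the object's state, pickle records the instruction
        # "call eval('6 * 7')". Whoever unpickles these bytes runs that call.
        return (eval, ("6 * 7",))


# Bytes an attacker could send to a socket that is read with recv_pyobj().
malicious_bytes = pickle.dumps(Payload())

# The receiver never gets a Payload instance back: deserialization itself
# executes eval() and returns its result.
result = pickle.loads(malicious_bytes)
print(result)  # 42
```

No validation step can run before the damage is done, because the code executes inside `pickle.loads` itself.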

The vulnerability originates in Meta's Llama Stack framework (CVE-2024-50050, with CVSS scores ranging from 6.3 to 9.3 depending on the scoring source). Llama Stack exposed ZeroMQ sockets over the network and deserialized incoming data with pickle, without authentication or validation. This gave attackers a direct pathway to execute arbitrary code on systems running the vulnerable inference engine.

Meta patched this vulnerability in October 2024, implementing JSON-based serialization as a safer replacement. However, the damage to the broader ecosystem had already begun through widespread code reuse.
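The general shape of a JSON-based replacement can be sketched as follows. This is not Meta's actual patch. It assumes a hypothetical message format with a string `op` field and a list of string `prompts`. Because `json.loads` only produces plain data, a hostile message can at worst fail validation; it cannot run code.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class InferenceRequest:
    """Hypothetical request schema; the field names are illustrative."""
    op: str
    prompts: List[str]


def decode_request(raw: bytes) -> InferenceRequest:
    """Parse and validate a JSON request; raise ValueError on bad input."""
    # json.loads builds only plain data (dict, list, str, numbers, bool, None),
    # so parsing cannot trigger code execution the way pickle.loads can.
    # Invalid UTF-8 raises UnicodeDecodeError, a subclass of ValueError.
    data = json.loads(raw.decode("utf-8"))
    # Check the structure explicitly instead of trusting the sender.
    if not isinstance(data, dict):
        raise ValueError("request must be a JSON object")
    op, prompts = data.get("op"), data.get("prompts")
    if not isinstance(op, str):
        raise ValueError("'op' must be a string")
    if not isinstance(prompts, list) or not all(isinstance(p, str) for p in prompts):
        raise ValueError("'prompts' must be a list of strings")
    return InferenceRequest(op=op, prompts=prompts)


print(decode_request(b'{"op": "generate", "prompts": ["Hello"]}'))
```

Every failure surfaces as a `ValueError`, so the receiving loop can log and drop bad messages in one place. This includes pickle bytes sent by an old or malicious client.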

Researchers discovered that developers across multiple organizations had directly copy-pasted the vulnerable code from Meta's Llama Stack into their own projects, perpetuating the identical security flaw. Evidence shows that:

vLLM (CVE-2025-30165, CVSS score: 8.0) copied code from Meta's vulnerable implementation, with file headers explicitly noting that it was "Adapted from Llama Stack".

Modular Max Server (CVE-2025-60455) borrowed vulnerable logic from both vLLM and SGLang, further propagating the flawed pattern.

SGLang and other frameworks replicated the unsafe patterns independently, suggesting a common architectural approach across AI inference server development without adequate security review.

This represents a critical failure in secure code reuse practices. Rather than implementing communication layers fresh with security in mind, developers treated vulnerable code as reference architecture, contaminating their own implementations.
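One practical safeguard is to scan borrowed code for the pattern before it is merged. The following is a minimal sketch, not a tool from the Oligo research. The function name `find_unsafe_deserialization` is hypothetical. It uses Python's standard `ast` module to flag any `.recv_pyobj()` call and any `pickle.load()`/`pickle.loads()` call. It is a heuristic: it misses aliased imports (such as `import pickle as pk`) and dynamic calls, so it complements human review rather than replacing it.

```python
import ast

# Call names treated as risky; this selection is an illustrative assumption.
RISKY_METHODS = {"recv_pyobj"}        # pyzmq: unpickles whatever arrives
RISKY_PICKLE_FUNCS = {"loads", "load"}


def find_unsafe_deserialization(source: str, filename: str = "<string>"):
    """Return sorted (line, description) pairs for suspicious calls."""
    findings = []
    tree = ast.parse(source, filename=filename)
    for node in ast.walk(tree):
        # Only attribute calls such as sock.recv_pyobj() or pickle.loads(x).
        if not isinstance(node, ast.Call) or not isinstance(node.func, ast.Attribute):
            continue
        attr = node.func.attr
        target = node.func.value
        if attr in RISKY_METHODS:
            findings.append((node.lineno, f"{attr}() deserializes with pickle"))
        elif (
            attr in RISKY_PICKLE_FUNCS
            and isinstance(target, ast.Name)
            and target.id == "pickle"
        ):
            findings.append((node.lineno, f"pickle.{attr}() on possibly untrusted data"))
    findings.sort()
    return findings


sample = """
import pickle
import zmq

def serve(sock):
    msg = sock.recv_pyobj()
    blob = sock.recv()
    return pickle.loads(blob)
"""

for line, desc in find_unsafe_deserialization(sample):
    print(line, desc)
```

Run over a project tree (for example, by feeding it each `.py` file's contents), a check like this would have flagged the copied ZeroMQ code paths described above, provided they use the module name `pickle` directly.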

The vulnerability pattern has been assigned multiple CVE identifiers across affected frameworks:

| Framework | CVE ID | CVSS Score | Status |
|---|---|---|---|
| vLLM | CVE-2025-30165 | 8.0 | Addressed (V1 engine default) |
| NVIDIA TensorRT-LLM | CVE-2025-23254 | 8.8 | Fixed (version 0.18.2+) |
| Modular Max Server | CVE-2025-60455 | N/A | Fixed |
| SGLang | N/A | N/A | Incomplete fixes |
| Microsoft Sarathi-Serve | N/A | N/A | Unpatched |

Inference servers form the backbone of enterprise AI infrastructure, processing sensitive customer data, proprietary model weights, and confidential prompts. Successful exploitation of these vulnerabilities lets attackers execute arbitrary code on GPU clusters, opening several attack paths:

Model Theft: Direct access to proprietary large language models and weights that represent millions in development investment.

Data Exfiltration: Extraction of customer data, training datasets, and confidential business intelligence processed by inference engines.

Privilege Escalation: Compromise of a single node could enable lateral movement throughout AI infrastructure clusters.

Crypto Mining: Installation of cryptocurrency miners that turn GPU capacity into revenue for attackers.

Supply Chain Compromise: Compromised inference engines could be used to distribute malware downstream to customers relying on the affected systems.

Oligo researchers identified thousands of exposed ZeroMQ sockets on the public internet, some explicitly tied to inference clusters running vulnerable frameworks. Enterprises operating unpatched inference engines therefore face a real risk of exploitation by internet-based attackers, who can locate exposed endpoints without sophisticated reconnaissance.
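A first triage step for this exposure is to check where ZeroMQ sockets are bound in your deployment configs. The helper below is hypothetical and not part of pyzmq. It parses a ZeroMQ endpoint string and classifies it, using only the standard `ipaddress` module. In ZeroMQ, `tcp://*:port` binds on all interfaces, and `ipc://`/`inproc://` endpoints are not reachable over TCP/IP. The sketch does not resolve hostnames or interface names; it reports those as needing review.

```python
import ipaddress


def classify_endpoint(endpoint: str) -> str:
    """Classify a ZeroMQ endpoint as 'local', 'network', or 'review'.

    Hypothetical audit helper. 'network' means reachable from at least the
    local network (possibly the internet); 'review' means a human must check.
    """
    transport, sep, address = endpoint.partition("://")
    if not sep:
        raise ValueError(f"not a ZeroMQ endpoint: {endpoint!r}")
    if transport in ("ipc", "inproc"):
        return "local"  # Unix domain socket or in-process: no TCP/IP exposure
    if transport != "tcp":
        return "review"  # other transports are out of scope for this sketch
    host, _, _port = address.rpartition(":")
    host = host.strip("[]")  # IPv6 literals appear as [::1]
    if host == "*":
        return "network"  # ZeroMQ wildcard: bind on all interfaces
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        return "review"  # hostname or interface name such as eth0
    if ip.is_unspecified:
        return "network"  # 0.0.0.0 or ::
    if ip.is_loopback:
        return "local"
    return "network"


for ep in ["tcp://*:5555", "tcp://0.0.0.0:5555", "tcp://127.0.0.1:5555",
           "ipc:///tmp/engine.sock", "tcp://eth0:5555", "tcp://10.0.0.5:5555"]:
    print(ep, "->", classify_endpoint(ep))
```

A "local" result only limits who can reach the socket. It does not make pickle deserialization safe, because any local process can still send a payload.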

Large enterprises including xAI, AMD, Nvidia, Intel, LinkedIn, Cursor, Oracle Cloud, and Google Cloud have adopted vulnerable SGLang versions, suggesting widespread exposure across critical infrastructure.

Immediate patching is essential. Organizations should upgrade to:

Meta Llama Stack version 0.0.41 or later

Nvidia TensorRT-LLM version 0.18.2 or later

vLLM version 0.8.0 or later

Modular Max Server version 25.6 or later
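The minimum versions above can be checked with a short script. This is a sketch under stated assumptions. The PyPI distribution names (`llama-stack`, `tensorrt-llm`, `vllm`, `modular`) are assumptions to verify against your own environment. The simplified comparison also ignores suffixes such as `rc1` or `post1`, so a pre-release of a minimum version would be reported as patched. A production check would more likely use the `packaging` library.

```python
import re
from importlib import metadata

# Minimum patched versions from the list above. The distribution names
# are assumptions; confirm them with `pip list` in your environment.
MIN_VERSIONS = {
    "llama-stack": "0.0.41",
    "tensorrt-llm": "0.18.2",
    "vllm": "0.8.0",
    "modular": "25.6",
}


def version_tuple(version: str) -> tuple:
    """Parse the leading numeric release, e.g. '0.8.5.post1' -> (0, 8, 5).

    Simplification: suffixes such as 'rc1' or 'post1' are ignored.
    """
    match = re.match(r"\d+(?:\.\d+)*", version)
    if not match:
        raise ValueError(f"unparseable version: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_at_least(installed: str, minimum: str) -> bool:
    a, b = version_tuple(installed), version_tuple(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that '0.8' and '0.8.0' compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def audit() -> dict:
    """Map each installed distribution to True (patched) or False."""
    results = {}
    for dist, minimum in MIN_VERSIONS.items():
        try:
            installed = metadata.version(dist)
        except metadata.PackageNotFoundError:
            continue  # not installed in this environment
        results[dist] = is_at_least(installed, minimum)
    return results


if __name__ == "__main__":
    print(audit())
```

Note that a version check does not cover the cases without a fix listed above (SGLang's incomplete fixes, or Microsoft Sarathi-Serve); those still need network isolation.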

Broader architectural changes include never passing untrusted data to pickle (including indirectly through recv_pyobj()), authenticating ZeroMQ communications with mechanisms such as HMAC and TLS, and establishing secure code review processes for community contributions and code reuse.
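The HMAC part of that advice can be sketched with the standard library alone. This is an illustrative design, not code from any affected project. It assumes both ends share a secret key distributed out of band. Each JSON message is prefixed with an HMAC-SHA256 tag, and the receiver verifies the tag with `hmac.compare_digest` before parsing anything. With pyzmq, the resulting bytes would be sent with `send()` and read with `recv()` instead of the pickle-based `send_pyobj()`/`recv_pyobj()`. The names `seal` and `open_sealed` are hypothetical.

```python
import hashlib
import hmac
import json
import secrets

TAG_SIZE = 32  # bytes in an HMAC-SHA256 digest


def seal(key: bytes, message: dict) -> bytes:
    """Serialize a message as JSON and prefix it with an HMAC-SHA256 tag."""
    body = json.dumps(message, separators=(",", ":")).encode("utf-8")
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return tag + body


def open_sealed(key: bytes, frame: bytes) -> dict:
    """Verify the tag first; only authenticated bytes reach the JSON parser."""
    if len(frame) < TAG_SIZE:
        raise ValueError("frame too short")
    tag, body = frame[:TAG_SIZE], frame[TAG_SIZE:]
    expected = hmac.new(key, body, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many tag bytes matched.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    return json.loads(body.decode("utf-8"))


# Hypothetical shared secret; in practice load it from a secrets manager.
key = secrets.token_bytes(32)
frame = seal(key, {"op": "generate", "prompts": ["Hello"]})
print(open_sealed(key, frame))

# A tampered frame is rejected before any parsing happens.
tampered = frame[:-1] + b"X"
try:
    open_sealed(key, tampered)
except ValueError as err:
    print("rejected:", err)
```

HMAC gives integrity and sender authentication but not confidentiality, so prompts still need encryption in transit (for example TLS, or ZeroMQ's CURVE security mechanism). The sketch also omits replay protection, such as a timestamp or counter inside the signed body.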

The ShadowMQ pattern exposes fundamental challenges in rapidly evolving open-source AI ecosystems. Projects moving at exceptional speed often prioritize features over security, borrowing architectural components from peers without adequate security validation. When reused code carries an unsafe pattern, the flaw spreads to every project that inherits it, and then to every project that borrows from those.

Oligo researchers emphasize that this vulnerability pattern likely represents only one instance of broader systemic security gaps within AI inference frameworks. The emergency response required to patch multiple vendors simultaneously underscores the need for industry-wide security standards, collaborative threat intelligence, and mandatory security reviews for code reuse in critical infrastructure.